The internet tends to explode whenever someone casually claims that “AGI is here,” and this time was no different. The spark came from Jensen Huang on the Lex Fridman Podcast, where he made a bold statement: “I think we’ve achieved AGI.” That single comment was enough to reignite one of the biggest debates in tech today. Are we truly on the edge of Artificial General Intelligence, or are we just getting carried away by rapid AI progress? To answer that, we first need to understand what AGI actually means and how it differs from the AI we use today.

What Is AGI and How Is It Different from AI?

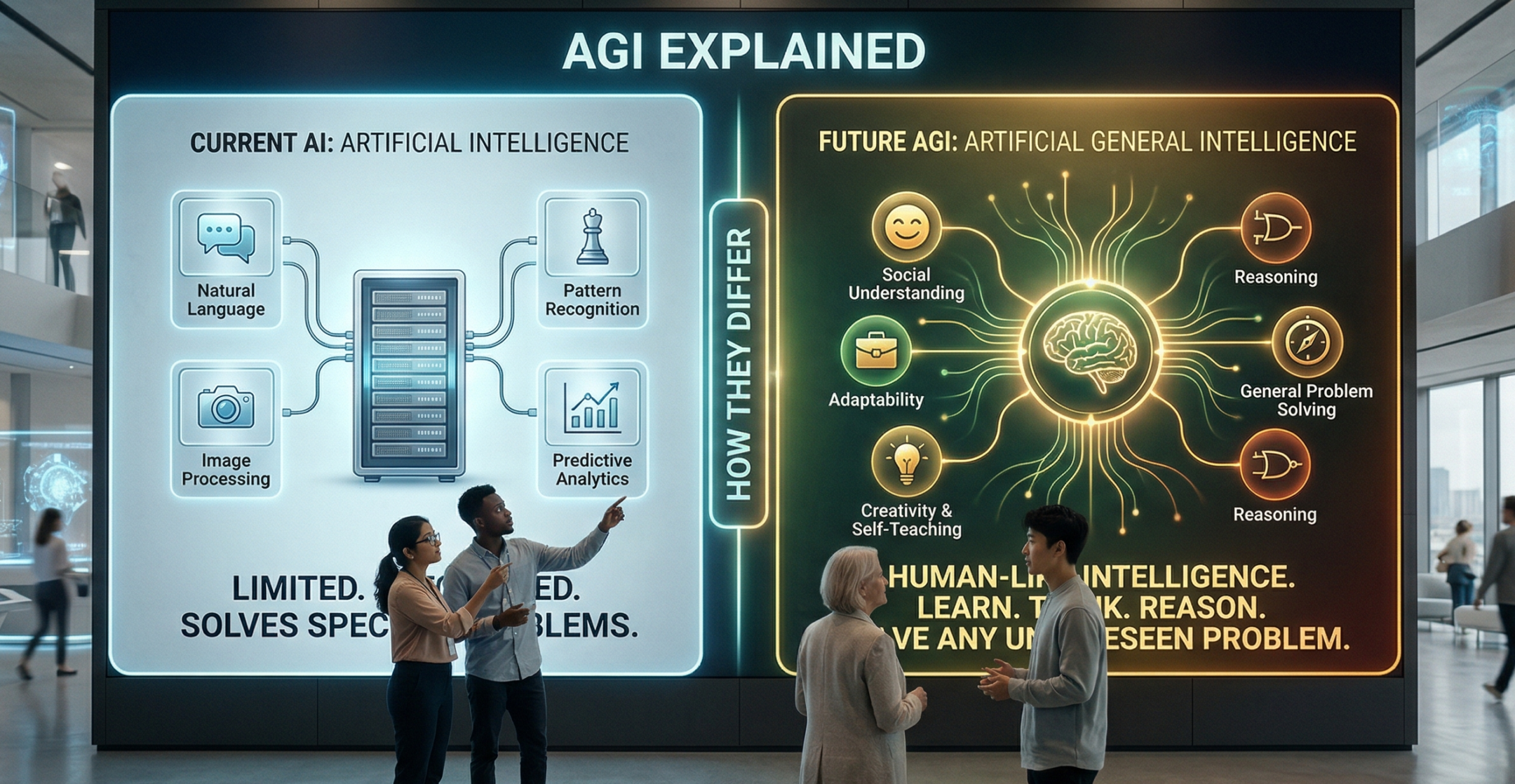

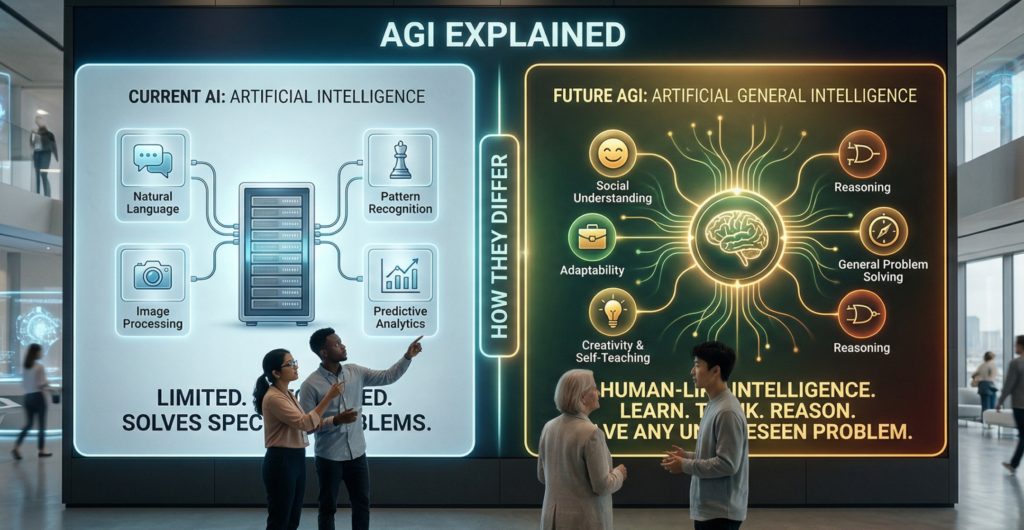

Artificial General Intelligence (AGI) refers to a level of machine intelligence where systems are no longer limited to specific tasks. Instead, they can think, learn, and adapt across multiple domains—much like humans. Unlike today’s AI, which is designed for narrow purposes, AGI would be capable of writing code, solving unfamiliar problems, making decisions, and even learning new skills without needing retraining.

Most of the technology we use today falls under Narrow AI. These systems are highly efficient but limited:

- Voice assistants can set reminders

- Recommendation engines suggest content

- Translation tools convert languages

However, they all operate within fixed boundaries. Step outside those boundaries, and their performance drops. AGI, by contrast, would break those limits: it could transfer knowledge between domains, reason through new situations, and handle tasks it was never explicitly trained for.

👉 In simple terms:

Today’s AI is a specialist, while AGI aims to be a true generalist.

Different Definitions, Different Opinions

Interestingly, not everyone agrees on what qualifies as AGI. Jensen Huang’s perspective leans toward capability—if an AI can perform complex, high-level tasks (like running a billion-dollar company), does that count as AGI?

But many experts disagree.

Researchers like Geoffrey Hinton, Yann LeCun, and Yoshua Bengio believe AGI should match or exceed human intelligence across nearly all cognitive tasks, including reasoning, learning, and problem-solving in completely new situations.

Meanwhile, Demis Hassabis suggests AGI could arrive within the next 5–8 years, but only with major breakthroughs. On the other hand, Elon Musk has hinted at a much shorter timeline, possibly within just a few years.

👉 The reality? There’s no clear consensus.

AI vs AGI: Key Differences

| Aspect | Artificial Intelligence (AI) | Artificial General Intelligence (AGI) |
|---|---|---|
| Definition | Designed for specific tasks | Can perform any intellectual task like a human |
| Capability | Limited to predefined domains | Works across multiple domains |
| Learning | Learns within training data | Learns and adapts independently |
| Flexibility | Cannot transfer knowledge easily | Transfers learning across tasks |
| Examples | Chatbots, voice assistants, recommendation systems | Still theoretical (no true AGI yet) |
| Thinking | Lacks true reasoning | Aims for human-like reasoning and creativity |

So, Are We Close to AGI?

Right now, we’re somewhere between hype and reality. Modern AI is incredibly powerful—it can write articles, generate code, analyze data, and even mimic human conversation convincingly. But that doesn’t necessarily mean it understands the world the way humans do.

The biggest challenge is that the definition of AGI keeps evolving. What one expert considers “AGI,” another might see as just an advanced form of AI.

👉 And that’s exactly why every time someone claims “AGI is here,” the internet reacts the way it does—not because everyone agrees, but because no one fully does.

Final Thoughts

AGI remains one of the most exciting—and controversial—goals in technology. While we’re making rapid progress, true AGI—machines that can think, learn, and adapt like humans across any situation—still seems just out of reach.